Features

+ Arrays of up to 1,024 three-axis Hall sensors

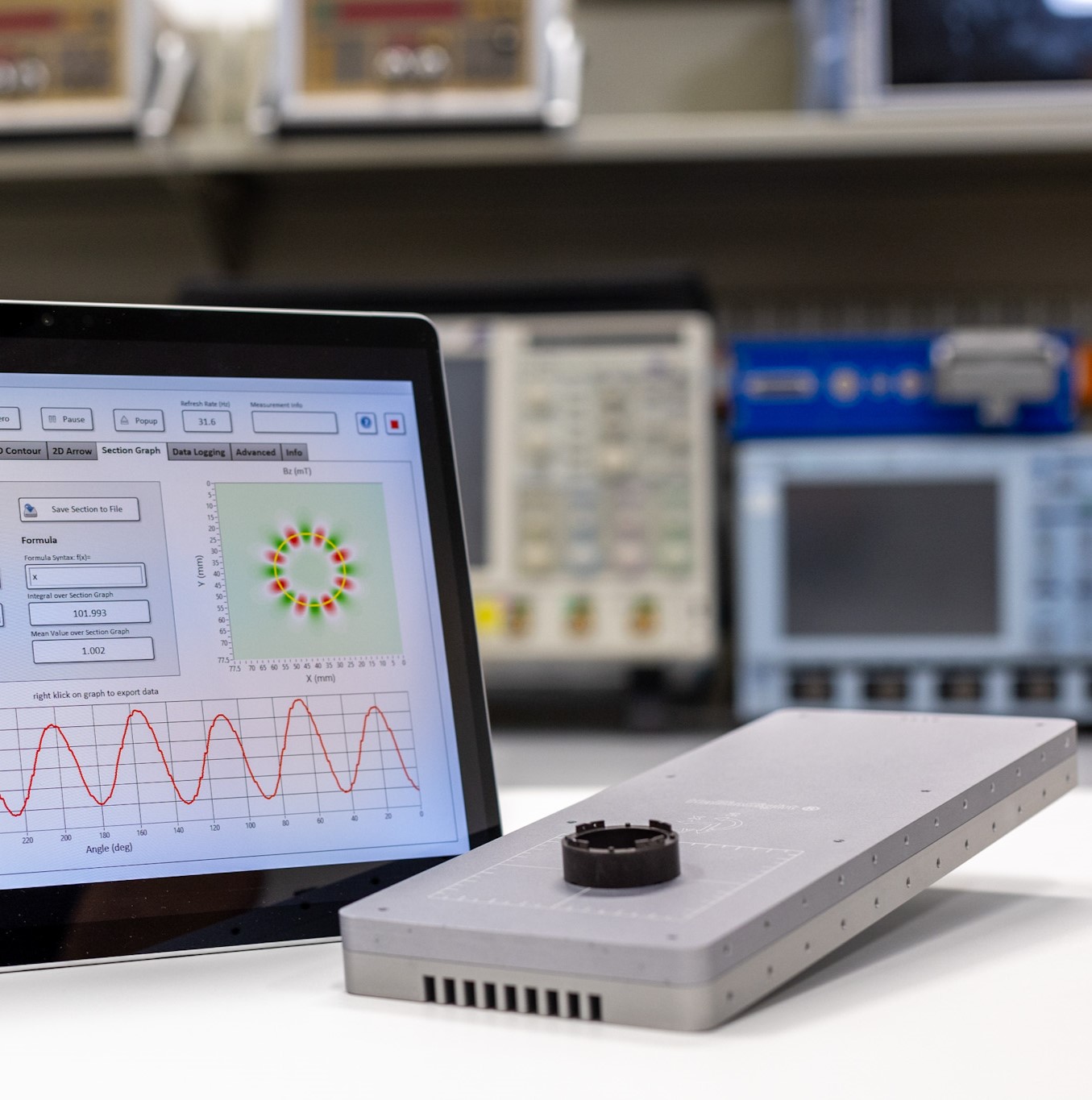

+ Real-time mapping of Bx, By, and Bz

+ Standard calibration up to 15 mT

+ Dynamic characterization of magnetic fields

+ Measurement ranges: 100, 400, 800, 2,000 mT

Features

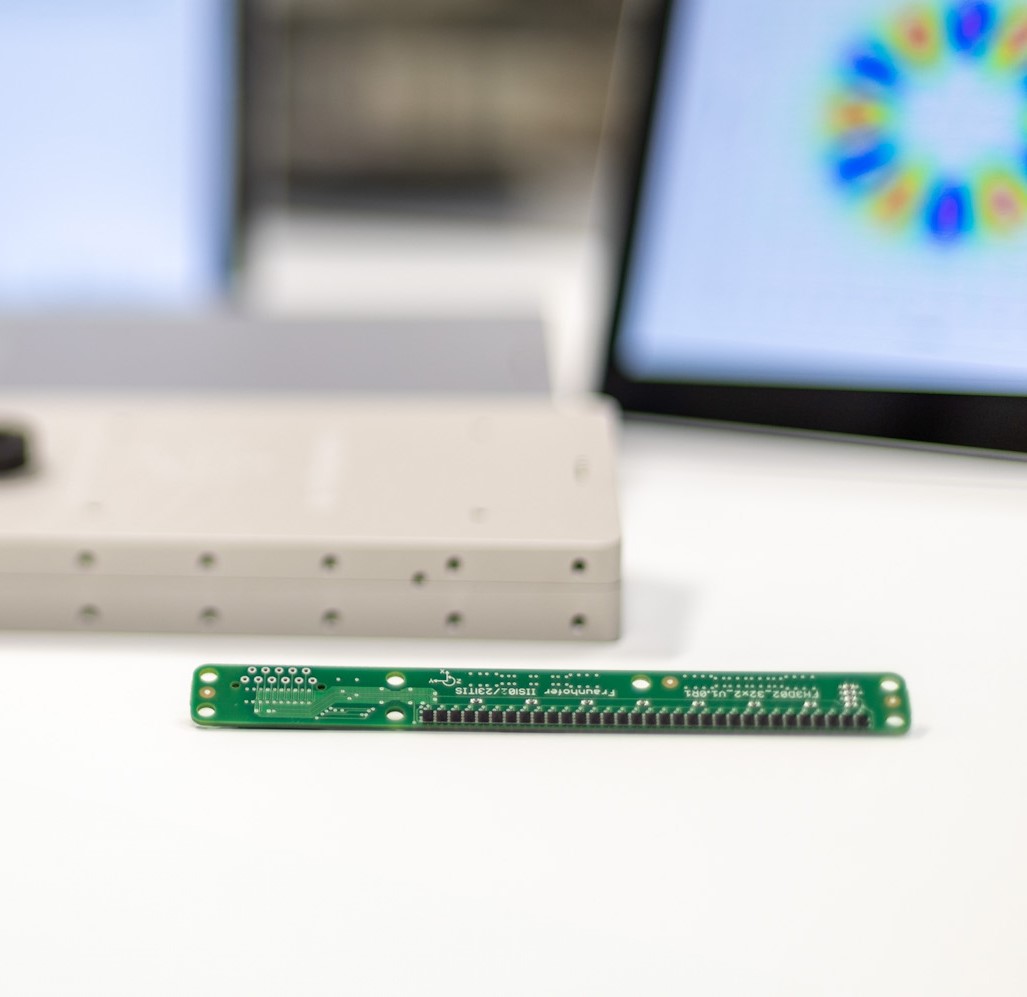

+ 1’024 3-axis hall sensor arrays

+ Real-time mapping of Bx, By, and Bz

+ Standard calibration up to 15 mT

+ Dynamic characterization of magnetic fields

+ Measurement ranges: 100, 400, 800, 2,000 mT

HallinSight® technology, a true 3D-Hall magnetic field camera ready for use.

HallinSight® systems are true three-axis magnetic field cameras that make the invisible visible by mapping dynamic magnetic fields in real time. Arrays of 32×32, 16×16, or 32×2 three-axis Hall sensors measure fields from a few µT to several T at frequencies of 25, 100, and 250 Hz, respectively.
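To put the array sizes and frequencies in perspective, the short sketch below works out the sensor count and the number of field-component readings per second for each configuration. It assumes that the quoted frequencies are full-frame update rates and that every sensor delivers one Bx, By, and Bz value per frame; neither assumption is stated in the text above. The names `CONFIGS` and `throughput` are illustrative only and are not part of any HallinSight® software.

```python
# Array layout (rows, cols) -> frequency in Hz, paired as listed in the text.
# Assumption: the frequency is the rate at which the whole array is read out.
CONFIGS = {
    (32, 32): 25,
    (16, 16): 100,
    (32, 2): 250,
}

AXES = 3  # Bx, By, Bz per sensor


def throughput(rows, cols, rate_hz):
    """Return (sensor count, field-component readings per second).

    Assumption: each sensor yields one reading per axis per frame.
    """
    sensors = rows * cols
    return sensors, sensors * AXES * rate_hz


for (rows, cols), rate_hz in CONFIGS.items():
    sensors, readings = throughput(rows, cols, rate_hz)
    print(f"{rows}x{cols}: {sensors} sensors, {readings} readings/s at {rate_hz} Hz")
```

Under these assumptions the 32×32 array corresponds to the 1,024 sensors named in the feature list.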

HallinSight® is a technology developed by the Fraunhofer Institute for Integrated Circuits IIS.